Update April 2025: This article is outdated. A new and improved LSEG Data Library for Python (aka Data Library version 2) is now available. Please see the updated article that uses the LSEG Data Library on the Setting up and run Python Data Science Environment with Jupyter Docker Stacks page.

Another article that might help you is the Essential Guide to the Data Libraries - Generations of Python library (EDAPI, RDP, RD, LD) article.

Introduction

Data scientists and financial coders need to work with a range of Data Science/Financial development tools such as the Anaconda (or Miniconda) Python distribution platform, the Python programming language, the R programming language, the Matplotlib library, the Pandas library, the Jupyter application, and many more.

One of the hardest parts of being a data developer is setting up those tools. You need to install a lot of software and libraries in the correct order to set up your Data Science development environment. Example steps are the following:

- Install Python or Anaconda/Miniconda

- Create a new virtual environment (It is not recommended to install programs into your base environment)

- Install Jupyter

- Install Data Science libraries such as Matplotlib, Pandas, Plotly, Bokeh, etc.

- If you are using R, install R and then its libraries

- If you are using Julia, install Julia and then its libraries

- ... and so on.

If you need to share your code/project with your peers, replicating the above steps in your colleagues' environments is complex too.

The good news is that you can reduce the effort of setting up the workbench with the Docker containerization platform. You may think Docker is only for DevOps or hardcore developers, but the Jupyter Docker Stacks let you create a ready-to-use Jupyter application with Data Science/Financial libraries in a few commands.

This article is the first part of a series that demonstrates how to set up a Jupyter Notebook environment with Docker to consume and display financial data from the Refinitiv Data Platform without performing the installation steps above. The article covers Jupyter with the Python programming language. If you are using R, please see the second article.

Introduction to Jupyter Docker Stacks

The Jupyter Docker Stacks are a set of ready-to-run Docker images containing Jupyter applications and interactive computing tools with built-in scientific, mathematical, and data analysis libraries pre-installed. With Jupyter Docker Stacks, setting up the environment is reduced to just the following steps:

- Install Docker and sign up for the DockerHub website (free).

- Run a command to pull an image that contains Jupyter and preinstalled packages based on the image type.

- Work with your notebook file

- If you need additional libraries that are not preinstalled with the image, you can create your image with a Dockerfile to install those libraries.

Docker also helps the team share the development environment by letting your peers replicate the same environment easily. You can share the notebooks, Dockerfile, dependencies-list files with your colleagues, then they just run one or two commands to run the same environment.

Jupyter Docker Stacks provide various images for developers based on their requirements such as:

- jupyter/scipy-notebook: Jupyter Notebook/JupyterLab with conda/mamba, ipywidgets, and popular packages from the scientific Python ecosystem (Pandas, Matplotlib, Seaborn, Requests, etc.)

- jupyter/r-notebook: Jupyter Notebook/JupyterLab with R interpreter, IRKernel, and devtools.

- jupyter/datascience-notebook: Everything in jupyter/scipy-notebook and jupyter/r-notebook images with Julia support.

- jupyter/tensorflow-notebook: Everything in jupyter/scipy-notebook image with TensorFlow.

Please see more detail about all image types on the Selecting an Image page.

Running the Jupyter Docker Scipy-Notebook Image

You can run the following command to pull the jupyter/scipy-notebook image (tag 70178b8e48d7) and start a container running a Jupyter Notebook server on your machine.

docker run -p 8888:8888 --name notebook -v <your working directory>:/home/jovyan/work -e JUPYTER_ENABLE_LAB=yes --env-file .env -it jupyter/scipy-notebook:70178b8e48d7

The above command sets the following container options:

- -p 8888:8888: Exposes the server on host port 8888.

- -v <your working directory>:/home/jovyan/work: Mounts the working directory on the host as the /home/jovyan/work folder in the container, so files are shared between your host machine and the container.

- -e JUPYTER_ENABLE_LAB=yes: Runs JupyterLab instead of the default classic Jupyter Notebook.

- --name notebook: Names the container notebook.

- -it: Enables interactive mode with a pseudo-TTY when running the container.

- --env-file .env: Passes the environment variables defined in the .env file to the container.

Note:

- Docker destroys the container and its data when you remove the container, so always use the -v option to keep your files on the host.

- The default notebook username of a container is always jovyan (but you can change it to something else).
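If you prefer to start the container from a script instead of typing the command, the options listed above can be assembled programmatically. The following is a minimal sketch only: the helper name build_docker_run_args and its parameters are hypothetical and are not part of Docker or the Jupyter Docker Stacks.

```python
import shlex
from pathlib import Path


def build_docker_run_args(work_dir, image="jupyter/scipy-notebook:70178b8e48d7",
                          name="notebook", host_port=8888, env_file=".env"):
    """Return the docker run argument list used in this article (hypothetical helper)."""
    return [
        "docker", "run",
        "-p", f"{host_port}:8888",               # publish container port 8888 on the host
        "--name", name,                          # container name
        "-v", f"{work_dir}:/home/jovyan/work",   # mount the host working directory
        "-e", "JUPYTER_ENABLE_LAB=yes",          # start JupyterLab
        "--env-file", env_file,                  # pass variables from the .env file
        "-it",                                   # interactive mode with a pseudo-TTY
        image,
    ]


args = build_docker_run_args(Path.cwd())
# Print the command for review; pass `args` to subprocess.run(args) to execute it.
print(shlex.join(args))
```

Keeping the arguments in a list avoids shell-quoting problems when the working directory path contains spaces.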

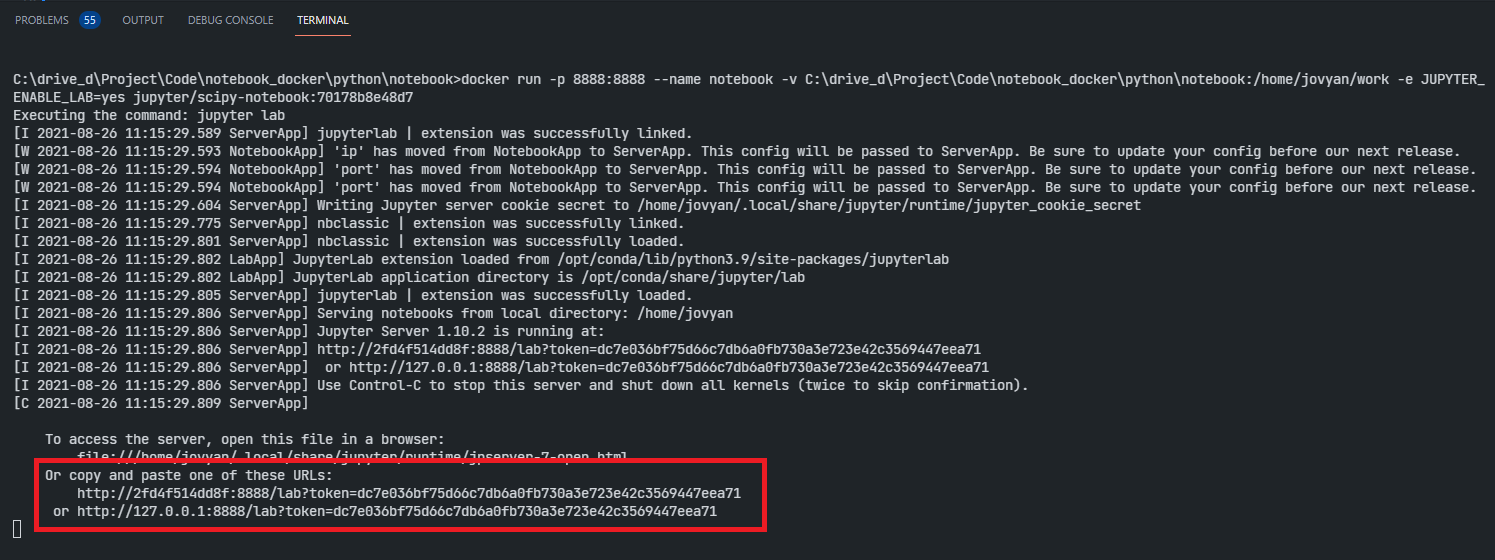

The command output, including the notebook server URL, is the following.

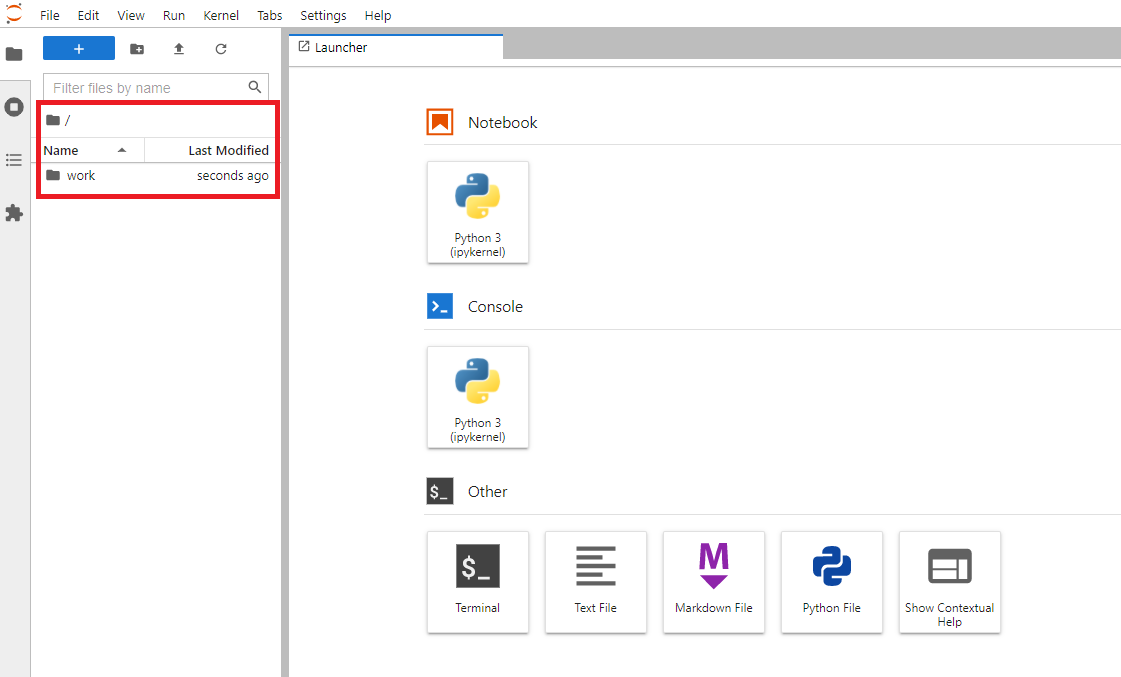

You can access the JupyterLab application by opening the notebook server URL in your browser. JupyterLab starts in the /home/jovyan/ location. Please note that only the notebooks and files in the work folder are saved to the host machine (<your working directory> folder).

The files in the <your working directory> folder will be available in the JupyterLab application the next time you start a container, so you can work with your files as in a normal JupyterLab/Anaconda environment.

To stop the container, press Ctrl+C.

Alternatively, you can run the docker stop <container name> command to stop the container and the docker rm <container name> command to remove it.

docker stop notebook

...

docker rm notebook

Requesting ESG Data from RDP APIs

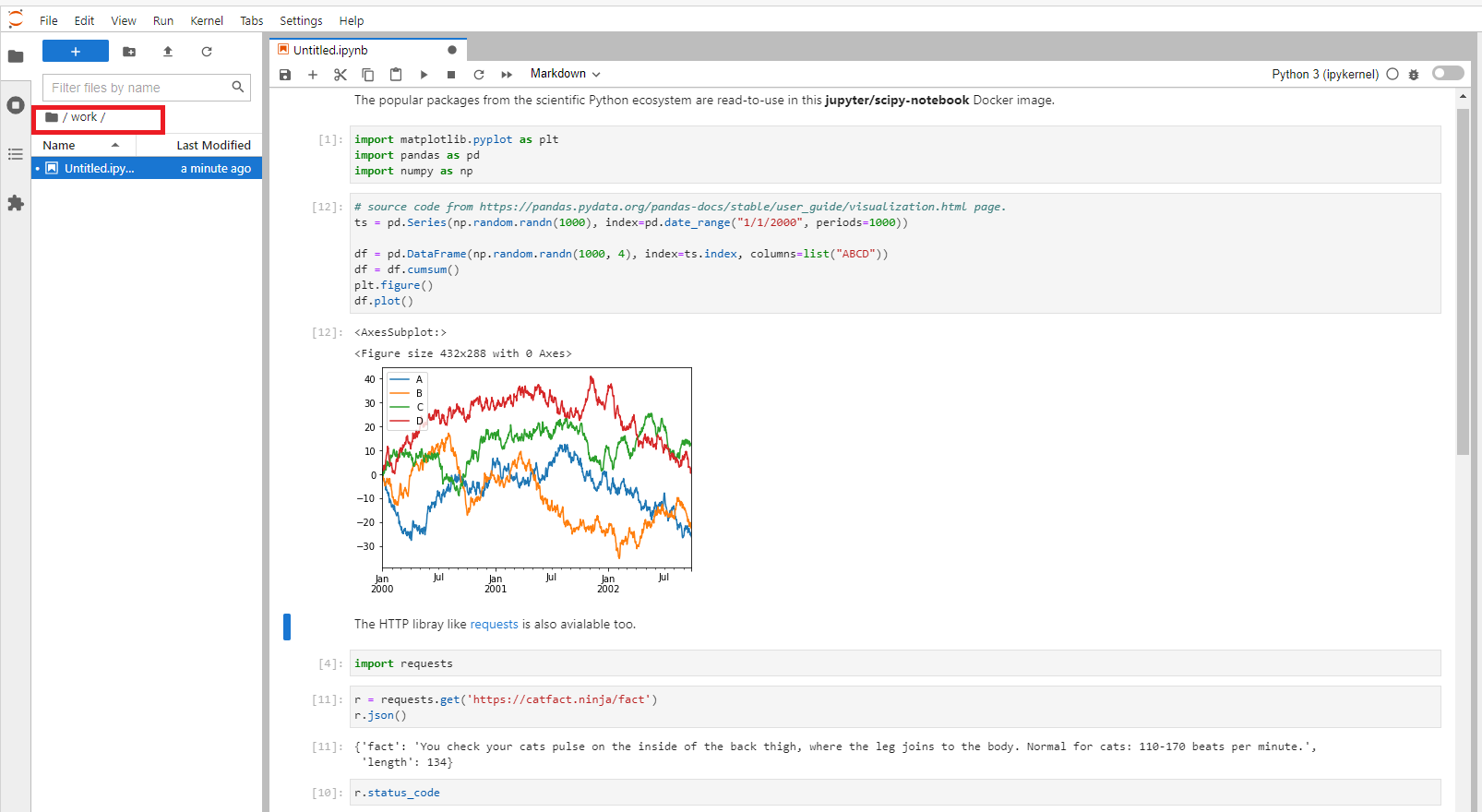

The jupyter/scipy-notebook image is suitable for building a notebook or dashboard with the Delivery Platform APIs (RDP APIs - formerly known as Refinitiv Data Platform) content. You can request data from RDP APIs with an HTTP library, perform data analysis, and then plot a graph with the built-in Python libraries.

What are RDP APIs?

The Delivery Platform APIs (RDP) provide various LSEG data and content for developers via an easy-to-use Web-based API.

RDP APIs give developers seamless and holistic access to all of the LSEG content such as Historical Pricing, Environmental Social and Governance (ESG), News, Research, etc., and let them commingle it with their own content, enriching, integrating, and distributing the data through a single interface, delivered wherever they need it. The RDP APIs delivery mechanisms are the following:

- Request - Response: RESTful web service (HTTP GET, POST, PUT or DELETE)

- Alert: a delivery mechanism for receiving asynchronous updates (alerts) to a subscription.

- Bulks: delivers substantial payloads, like the end-of-day pricing data for the whole venue.

- Streaming: delivers messages in real time.

This example project focuses on the Request-Response: RESTful web service delivery method only.

For more detail regarding RDP APIs, please see the following APIs resources:

- Quick Start page.

- Tutorials page.

- RDP APIs: Introduction to the Request-Response API page.

- RDP APIs: Authorization - All about tokens page.

The example notebook for this scenario is the rdp_apis_notebook.ipynb file in the /python/notebook folder of the GitHub repository. To run this rdp_apis_notebook.ipynb example notebook, create a .env file in the /python/ folder with the RDP credentials and endpoints information, and then run the jupyter/scipy-notebook image to start a Jupyter server with the following command in the /python/ folder.

docker run -p 8888:8888 --name notebook -v <project /python/notebook/ directory>:/home/jovyan/work -e JUPYTER_ENABLE_LAB=yes --env-file .env -it jupyter/scipy-notebook:70178b8e48d7

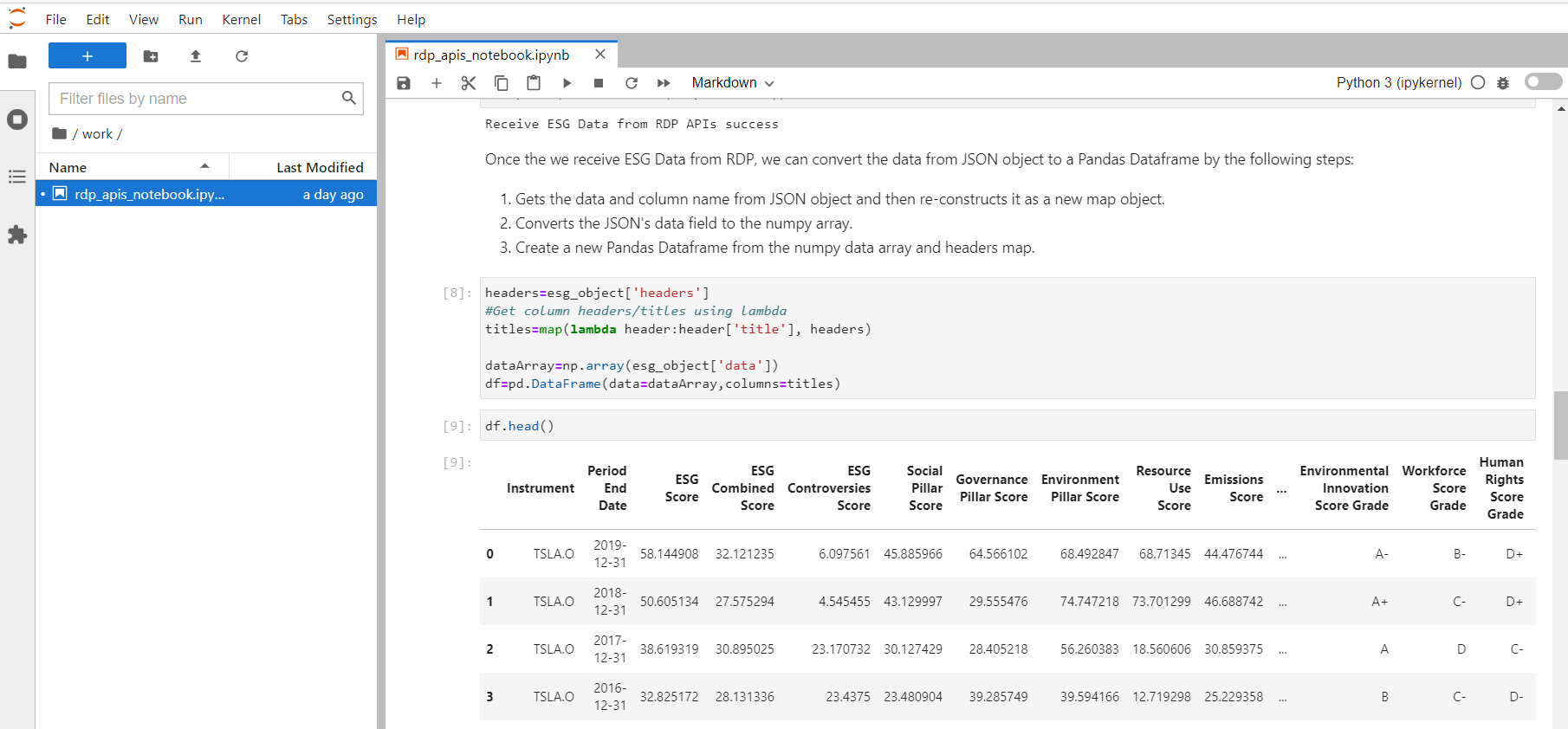

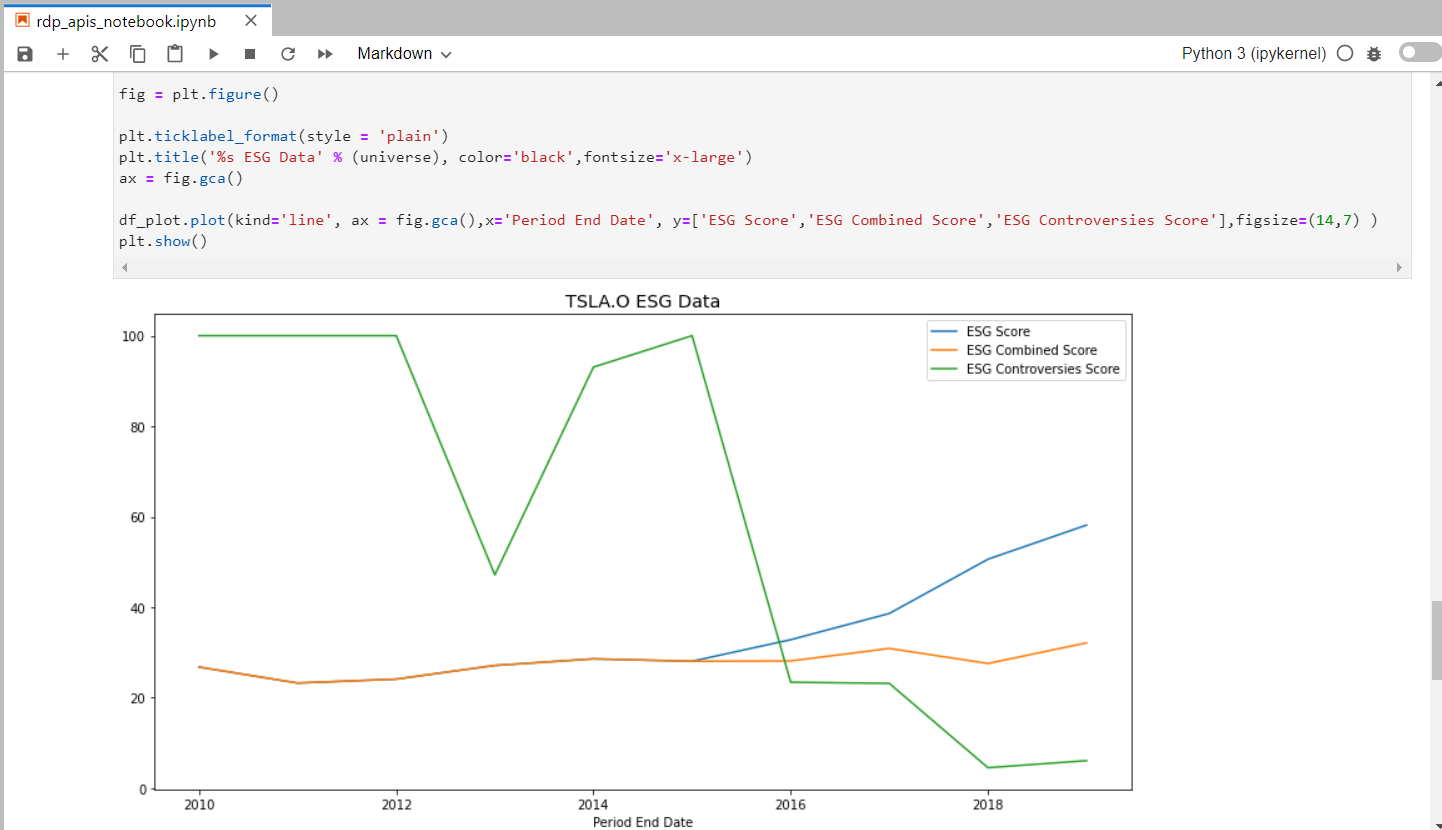

The above command starts a container named notebook and mounts the /python/notebook/ folder to the container's /home/jovyan/work directory. Once you have opened the notebook server URL in a web browser, the rdp_apis_notebook.ipynb example notebook will be available in the work directory of JupyterLab. The rdp_apis_notebook.ipynb example notebook uses the built-in libraries in the image to authenticate with the RDP Auth Service and request Environmental Social and Governance (ESG) data from the RDP ESG Service to plot a graph. You can run through each step of the notebook. All changes you make to the file are saved for a later run too.

Please see the full detail regarding how to run this example notebook in the How to run the Jupyter Docker Scipy-Notebook section.

The rdp_apis_notebook.ipynb notebook workflow is identical to the example notebook on my How to separate your credentials, secrets, and configurations from your source code with environment variables article. It sends the HTTP request message to the RDP APIs Auth service to get the RDP APIs access token. Once it receives an access token, it requests ESG (Environmental, Social, and Governance) data from the RDP APIs ESG service.
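The two-step workflow above (get an access token, then request ESG data) can be sketched as follows. This is a minimal sketch with the standard library only, not the notebook's actual code. The form fields of the token request reflect my understanding of the RDP password-grant request, so please verify them against the RDP APIs: Authorization - All about tokens page. The helper names and the IBM.N instrument code are assumptions for illustration. Requests are only sent when the RDP_* environment variables are set.

```python
import json
import os
import urllib.parse
import urllib.request

# Endpoints come from the same environment variables as the .env file in this article.
BASE_URL = os.getenv("RDP_BASE_URL", "https://api.refinitiv.com")
AUTH_URL = os.getenv("RDP_AUTH_URL", "/auth/oauth2/v1/token")
ESG_URL = os.getenv("RDP_ESG_URL", "/data/environmental-social-governance/v2/views/scores-full")


def build_token_request(user, password, app_key):
    """Build (but do not send) the password-grant request for an access token."""
    form = {
        "username": user,
        "password": password,
        "grant_type": "password",
        "scope": "trapi",                      # assumed scope value
        "takeExclusiveSignOnControl": "true",  # assumed field, check the RDP documentation
        "client_id": app_key,
    }
    return urllib.request.Request(
        BASE_URL + AUTH_URL,
        data=urllib.parse.urlencode(form).encode("utf-8"),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def build_esg_request(access_token, universe):
    """Build (but do not send) the ESG request for one instrument code."""
    query = urllib.parse.urlencode({"universe": universe})
    return urllib.request.Request(
        f"{BASE_URL}{ESG_URL}?{query}",
        headers={"Authorization": f"Bearer {access_token}"},
        method="GET",
    )


def send(request):
    """Send a prepared request and decode the JSON response body."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


if all(os.getenv(key) for key in ("RDP_USER", "RDP_PASSWORD", "RDP_APP_KEY")):
    token = send(build_token_request(
        os.environ["RDP_USER"], os.environ["RDP_PASSWORD"], os.environ["RDP_APP_KEY"]))
    esg = send(build_esg_request(token["access_token"], "IBM.N"))  # hypothetical instrument
    print(list(esg))  # top-level keys of the ESG response
```

Separating request building from sending keeps the credentials handling easy to inspect without touching the network.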

The notebook plots the ESG data chart with the pre-installed matplotlib.pyplot library.

How to change Container User

The Jupyter Docker Stacks images are Linux containers that run the Jupyter server for you. The default notebook user (NB_USER) of the Jupyter server is always jovyan and the home directory is always /home/jovyan. However, you can change the notebook user to another name via the following container options.

docker run -e CHOWN_HOME=yes --user root -e NB_USER=<User> -v <project /python/notebook/ directory>:/home/<User>/work

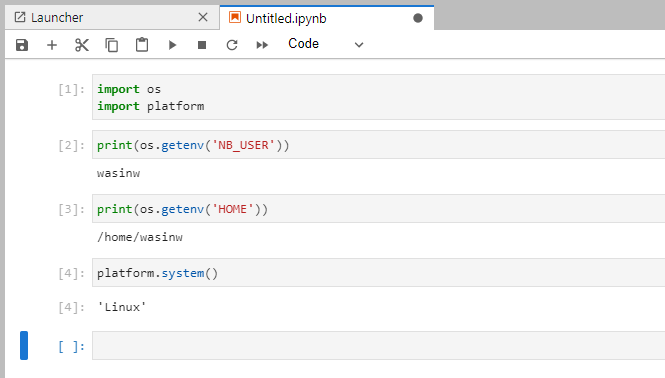

Example with wasinw user.

docker run -p 8888:8888 --name notebook -e CHOWN_HOME=yes --user root -e NB_USER=wasinw -v C:\drive_d\Project\Code\notebook_docker\python\notebook:/home/wasinw/work -e JUPYTER_ENABLE_LAB=yes --env-file .env jupyter/scipy-notebook:70178b8e48d7

Now the notebook user is wasinw and the working directory is /home/wasinw/work folder.

Please note that this example project uses jovyan as a default notebook user.

How to use other Python Libraries

If you are using libraries that do not come with the jupyter/scipy-notebook Docker image, such as the Plotly Python library, you can install them directly from a notebook cell with either the pip or the conda/mamba tool.

Example with pip:

import sys

!$sys.executable -m pip install plotly

Example with conda:

import sys

!conda install --yes --prefix {sys.prefix} plotly

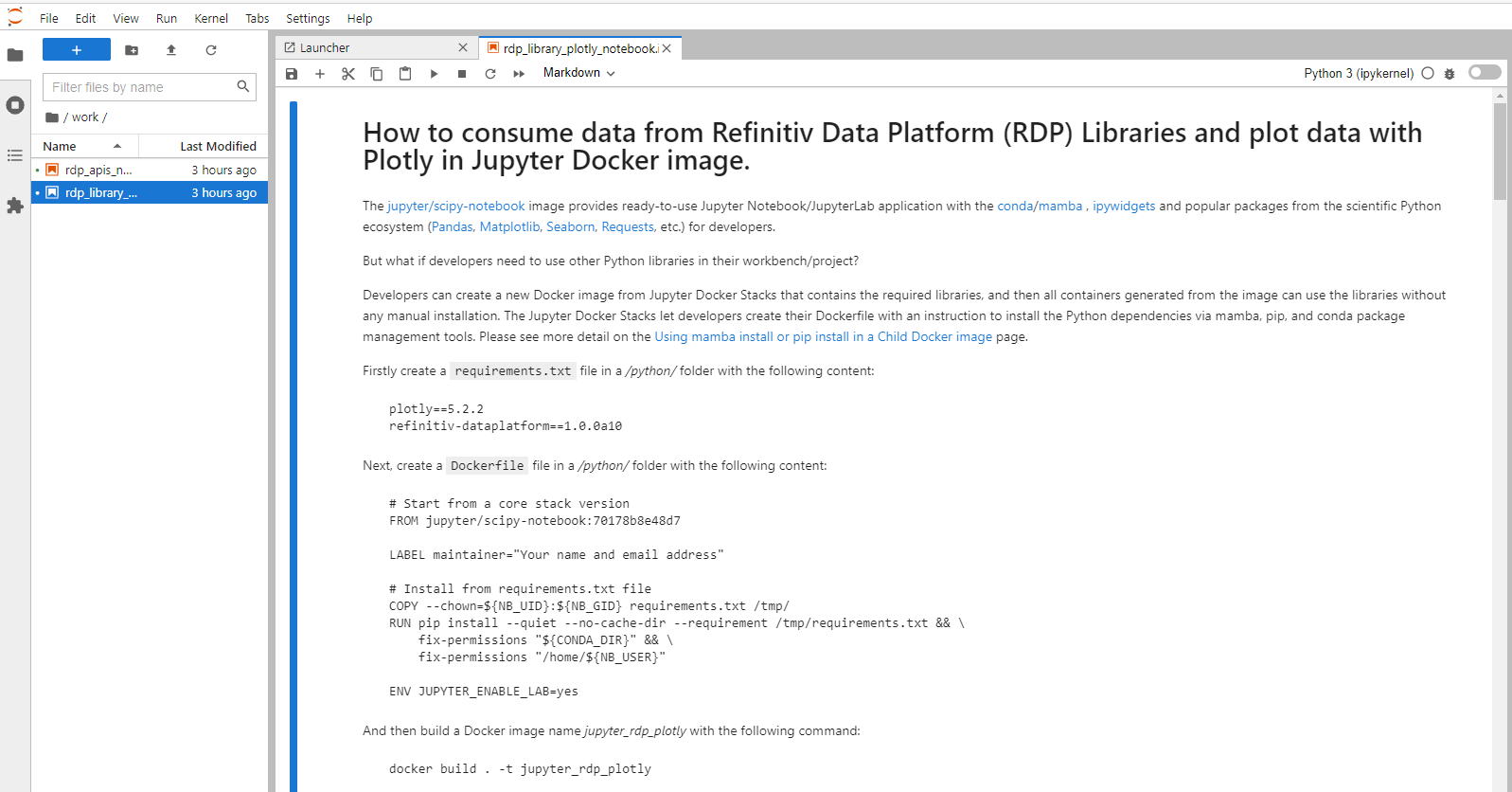

However, this solution installs the package only into the running container, so it is lost when the container is removed. A better solution is to create a new Docker image from Jupyter Docker Stacks that contains the required libraries, and then all containers generated from the image can use the libraries without any manual installation.
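Because packages installed at run time do not persist, a notebook can check whether a package is present in the kernel's environment before it tries to import it. The following is a minimal sketch with the standard library; the helper name is_installed is hypothetical.

```python
import importlib.util


def is_installed(module_name):
    """Return True if the top-level module can be found in the current environment."""
    return importlib.util.find_spec(module_name) is not None


# Report which of the libraries mentioned in this article are available.
for name in ("pandas", "matplotlib", "plotly"):
    status = "available" if is_installed(name) else "missing"
    print(f"{name}: {status}")
```

A "missing" result for plotly in a fresh jupyter/scipy-notebook container would be a sign that the package has to be installed again or added to a custom image.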

The Jupyter Docker Stacks let developers create their own Dockerfile with instructions to install the Python dependencies via the mamba, pip, and conda package management tools. Please see more detail on the Using mamba install or pip install in a Child Docker image page.

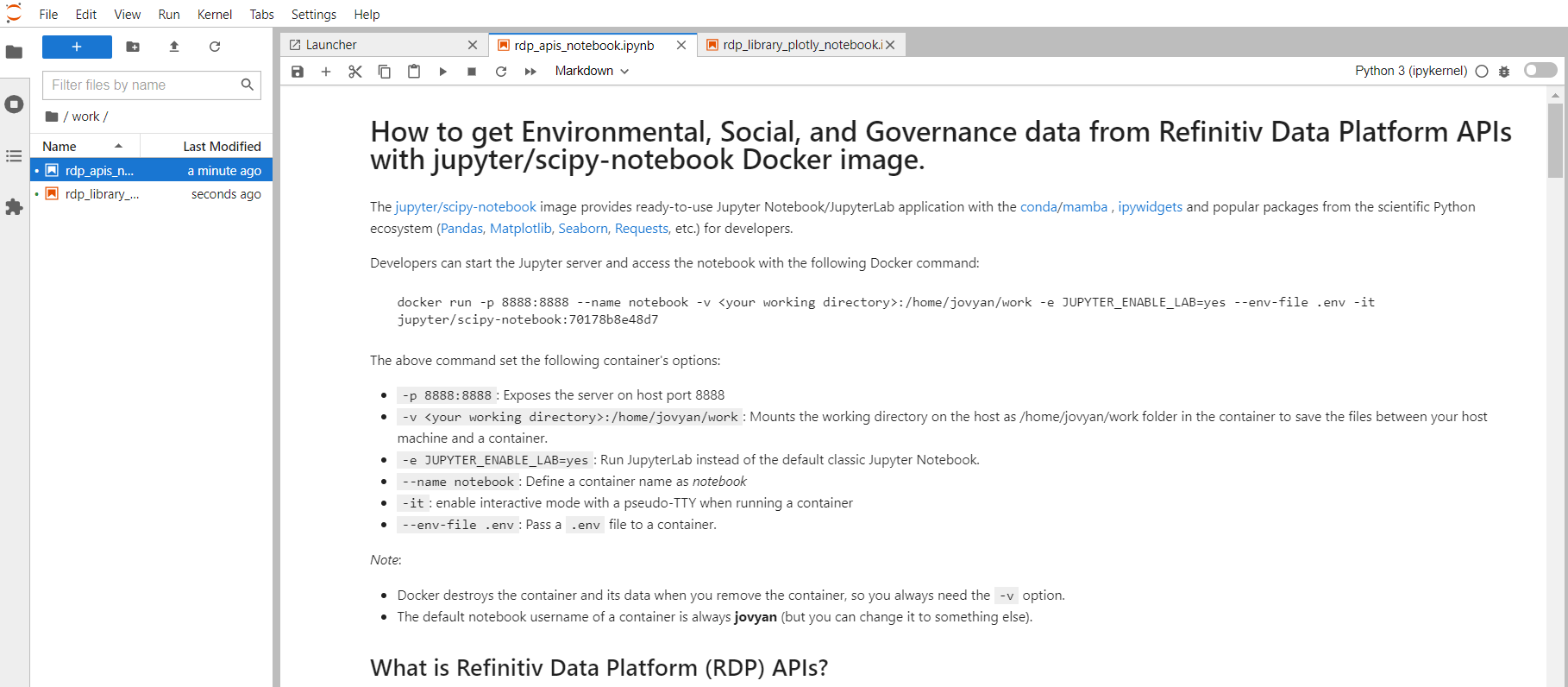

Example with LSEG Data via Data Platform Library and Plotly

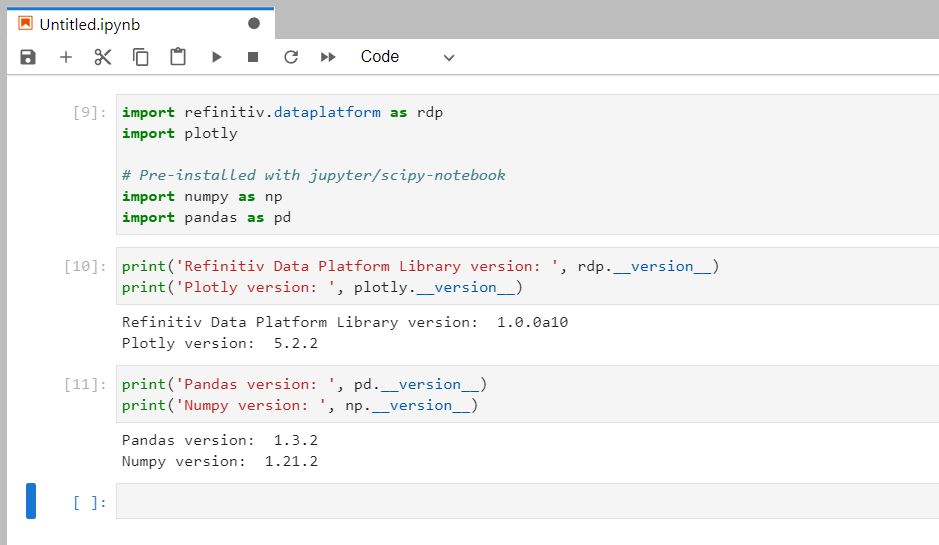

Let's demonstrate with the Data Platform Library for Python (RDP Libraries for Python) and Plotly libraries.

Introduction to Data Platform (RDP) Libraries

Update March 2025: A new and improved LSEG Data Library for Python (Data Library version 2) is now available. Please find more detail in the Essential Guide to the Data Libraries - Generations of Python library (EDAPI, RDP, RD, LD) article.

This article is based on the Data Library version beta (RDP Libraries).

LSEG provides a wide range of content and data, which requires multiple technologies, delivery mechanisms, data formats, and multiple APIs to access. The RDP Libraries are a suite of easy-to-use interfaces providing unified access to streaming and non-streaming data services offered within the RDP APIs. The Libraries simplify access to data across delivery modes such as Request-Response, Streaming, Bulk File, and Queues via a single library.

For more detail regarding the RDP Libraries, please refer to the following articles and tutorials:

- Developer Article: Discover our RDP Libraries part 1.

- Developer Article: Discover our RDP Libraries part 2.

- RDP Libraries Document: An Introduction page.

Disclaimer

As this example project has been tested on alpha version 1.0.0a10 of the Python library, the method signatures, data formats, etc. are subject to change.

First, create a requirements.txt file in the /python/ folder with the following content:

plotly==5.2.2

refinitiv-dataplatform==1.0.0a10

Next, create a Dockerfile in the /python/ folder with the following content:

# Start from a core stack version

FROM jupyter/scipy-notebook:70178b8e48d7

LABEL maintainer="Your name and email address"

# Install from requirements.txt file

COPY --chown=${NB_UID}:${NB_GID} requirements.txt /tmp/

RUN pip install --quiet --no-cache-dir --requirement /tmp/requirements.txt && \
    fix-permissions "${CONDA_DIR}" && \
    fix-permissions "/home/${NB_USER}"

ENV JUPYTER_ENABLE_LAB=yes

Please note that the Dockerfile sets the ENV JUPYTER_ENABLE_LAB=yes environment variable, so all containers generated from this image run the JupyterLab application by default.

Then build a Docker image named jupyter_rdp_plotly with the following command:

docker build . -t jupyter_rdp_plotly

Once the Docker image is built successfully, you can run the following command to start a container running a Jupyter Notebook server with all the Python libraries defined in the requirements.txt file and in jupyter/scipy-notebook on your machine.

docker run -p 8888:8888 --name notebook -v <project /python/notebook/ directory>:/home/jovyan/work --env-file .env -it jupyter_rdp_plotly

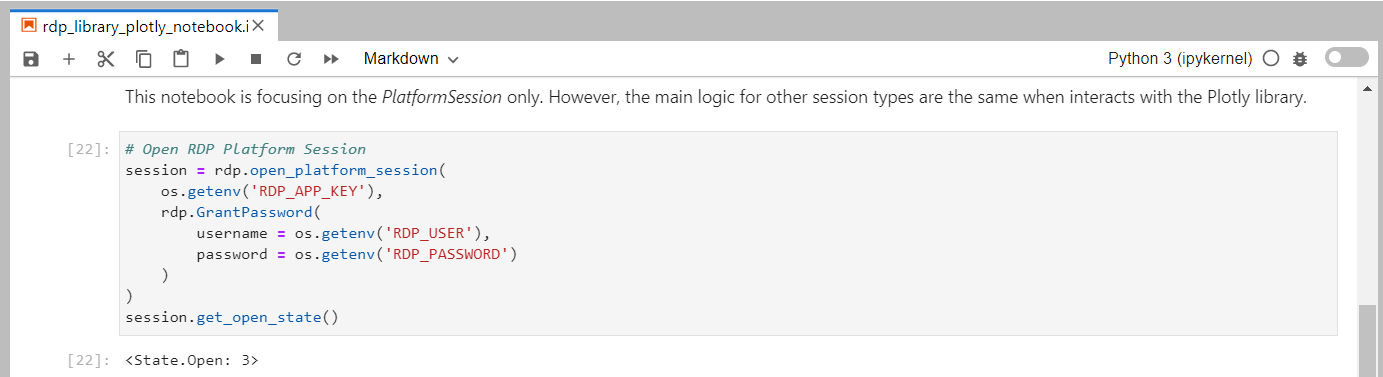

Please note that all credentials are passed to the Jupyter server's environment variables via the docker run --env-file .env option, so the notebook can access those configurations via the os.getenv() function. Developers do not need to keep credential information in the notebook source code.
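Reading the credentials from the environment can be sketched as follows. This is a minimal sketch, not the notebook's actual code: the helper name load_credentials is hypothetical, and the variable names are the ones used in the .env file of this article.

```python
import os

REQUIRED = ("RDP_USER", "RDP_PASSWORD", "RDP_APP_KEY")


def load_credentials(environ=os.environ):
    """Read the RDP credentials from a mapping, failing early if any is absent or empty."""
    missing = [key for key in REQUIRED if not environ.get(key)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {key: environ[key] for key in REQUIRED}


# Example with a stand-in mapping; inside the container, call load_credentials() with no argument.
creds = load_credentials({"RDP_USER": "user", "RDP_PASSWORD": "secret", "RDP_APP_KEY": "key"})
print(sorted(creds))
```

Failing early with the list of missing names is usually easier to debug than an authentication error later in the notebook.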

Then you can start to create notebook applications that consume content from Refinitiv with the RDP Library API, and then plot data with the Plotly library. Please see more detail in the rdp_library_plotly_notebook.ipynb example notebook file in the /python/notebook folder of the GitHub repository. Please see the full detail regarding how to run this example notebook in the How to build and run the Jupyter Docker Scipy-Notebook customized image with RDP Library for Python and Plotly section.

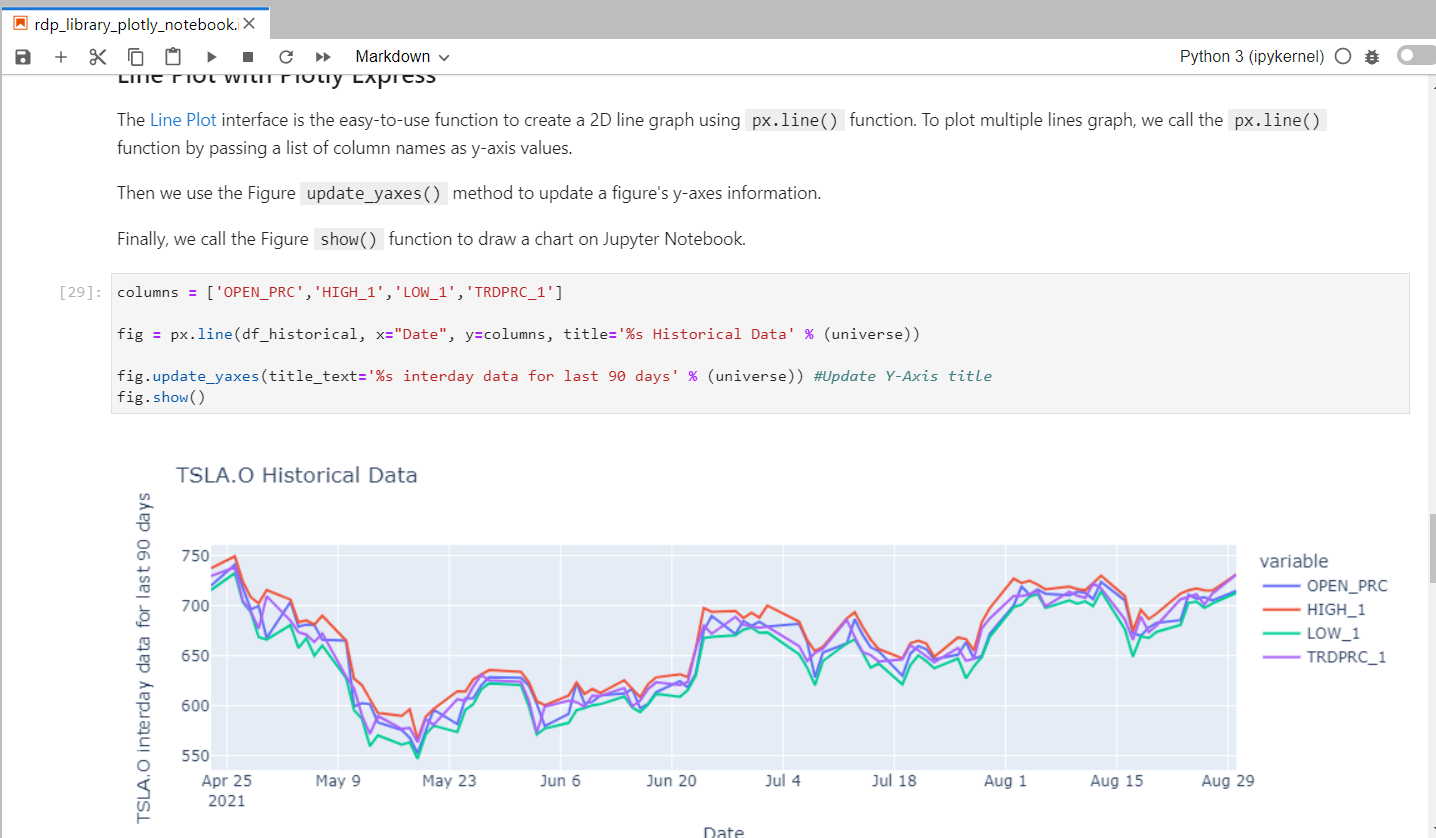

The rdp_library_plotly_notebook.ipynb workflow starts by initializing the RDP session.

Then the notebook requests historical data from the RDP platform using the RDP Libraries Function Layer, and plots that historical data as a multi-line graph with Plotly Express.

Caution: You should add .env (and .env.example), Jupyter checkpoints, cache, config, etc. files to the .dockerignore file to avoid adding them to a public Docker Hub repository.

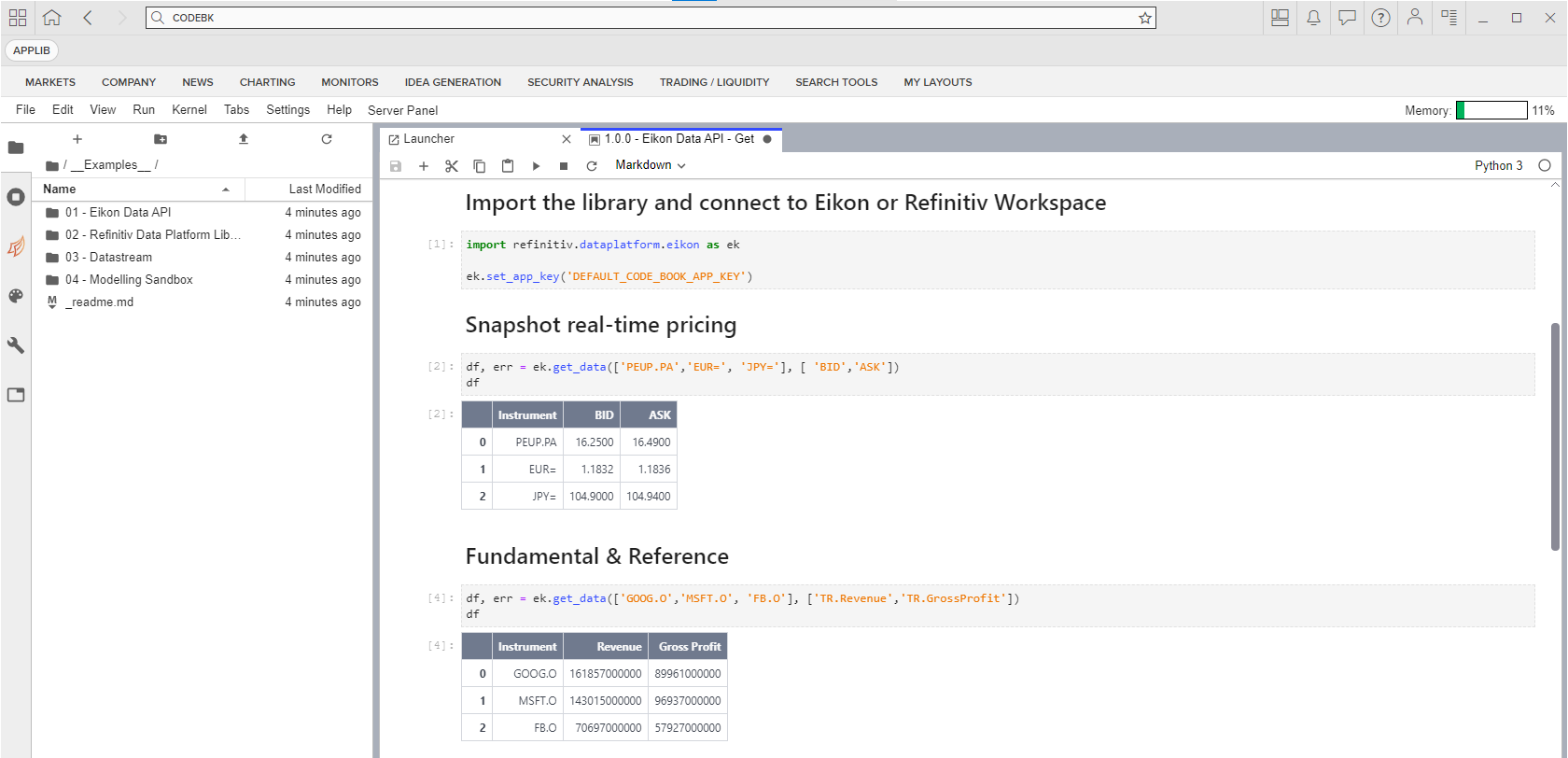

What if I use Eikon Data API?

If you are using the Eikon Data API (aka DAPI), the Jupyter Docker Stacks are not for you. The Refinitiv Workspace/Eikon application integrates a Data API proxy that acts as an interface between the Eikon Data API Python library and the Eikon Data Platform. For this reason, the Refinitiv Workspace/Eikon application must be running on the same machine as the Eikon Data API application, and the Refinitiv Workspace/Eikon application does not support Docker.

However, you can access CodeBook, the cloud-hosted Jupyter Notebook development environment for Python scripting, from the application. CodeBook is natively available in Refinitiv Workspace and Eikon as an app (no installation required!!), providing access to Refinitiv APIs and other popular Python libraries that are already pre-installed on the cloud. The list of pre-installed libraries is available in the CodeBook's Libraries&Extensions.md file.

Please see more detail regarding the CodeBook app in the Use Eikon Data API or RDP Library in Python in CodeBook on Web Browser article.

Demo prerequisite

This example requires the following software and libraries.

- RDP Access credentials.

- Docker Desktop/Engine version 20.10.x

- DockerHub account (free subscription).

- Internet connection.

Please contact your LSEG representative to help you access the RDP account and services. You can find more detail regarding the RDP access credentials setup in the Getting Start with Data Platform article.

How to run the Examples

The first step is to unzip or download the example project folder into a directory of your choice, then set up Python or R Docker environments based on your preference.

Caution: You should not share a .env file with your peers or commit/push it to version control. You should add the file to the .gitignore file to avoid adding it to version control or a public repository accidentally.

How to run the Jupyter Docker Scipy-Notebook

First, open the project folder in the command prompt and go to the python subfolder. Then create a file named .env in that folder with the following content:

# RDP Core Credentials

RDP_USER=<Your RDP User>

RDP_PASSWORD=<Your RDP Password>

RDP_APP_KEY=<Your RDP App Key>

# RDP Core Endpoints

RDP_BASE_URL=https://api.refinitiv.com

RDP_AUTH_URL=/auth/oauth2/v1/token

RDP_ESG_URL=/data/environmental-social-governance/v2/views/scores-full
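Before starting the container, it can help to confirm that the .env file defines every variable listed above. The following is a minimal sketch of such a check. The helper names are hypothetical, and the parser only handles simple KEY=VALUE lines and # comment lines, which is the form used in this article.

```python
from pathlib import Path

REQUIRED_KEYS = {"RDP_USER", "RDP_PASSWORD", "RDP_APP_KEY",
                 "RDP_BASE_URL", "RDP_AUTH_URL", "RDP_ESG_URL"}


def parse_env_text(text):
    """Parse KEY=VALUE lines, skipping blank lines and lines that start with '#'."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value
    return values


def missing_keys(text):
    """Return the required keys that are absent or empty, in sorted order."""
    values = parse_env_text(text)
    return sorted(key for key in REQUIRED_KEYS if not values.get(key))


env_path = Path(".env")
if env_path.exists():
    print("Missing keys:", missing_keys(env_path.read_text()))
```

An empty list means every expected variable is present. The check says nothing about whether the values themselves are valid.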

Run the following docker run command in a command prompt to pull the Jupyter Docker Scipy-Notebook image and run its container.

docker run -p 8888:8888 --name notebook -v <project /python/notebook/ directory>:/home/jovyan/work -e JUPYTER_ENABLE_LAB=yes --env-file .env -it jupyter/scipy-notebook:70178b8e48d7

The Jupyter Docker Scipy-Notebook container will run the Jupyter server and print the server URL in the console. Click on that URL to open the JupyterLab application in the web browser.

Finally, open the work folder and open rdp_apis_notebook.ipynb example notebook file, then run through each notebook cell.

How to build and run the Jupyter Docker Scipy-Notebook customized image with RDP Library for Python and Plotly

First, open the project folder in the command prompt and go to the python subfolder. Then create a file named .env in that folder with the following content. You can skip this step if you already did it in the Scipy-Notebook run section above.

# RDP Core Credentials

RDP_USER=<Your RDP User>

RDP_PASSWORD=<Your RDP Password>

RDP_APP_KEY=<Your RDP App Key>

Run the following docker build command to build the Docker image named jupyter_rdp_plotly:

docker build . -t jupyter_rdp_plotly

Once Docker has built the image successfully, run the following command to start a container:

docker run -p 8888:8888 --name notebook -v <project /python/notebook/ directory>:/home/jovyan/work --env-file .env -it jupyter_rdp_plotly

The jupyter_rdp_plotly container will run the Jupyter server and print the server URL in the console. Click on that URL to open the JupyterLab application in the web browser.

Open the work folder and open rdp_library_plotly_notebook.ipynb example notebook file, then run through each notebook cell.

Conclusion

Docker is an open containerization platform for developing, testing, deploying, and running any software application. The Jupyter Docker Stacks provide a ready-to-use and consistent development environment for Data Scientists, Financial coders, and their teams. Developers no longer need to set up their environment/workbench (Anaconda, Virtual Environment, Jupyter installation, etc.) manually, which is the most complex task for them. Developers can just run a single command to start the Jupyter notebook server from Jupyter Docker Stacks and continue their work.

The Jupyter Docker Stacks already contain a handful of libraries for Data Science/Financial development for various requirements (Python, R, Machine Learning, and much more). If developers need additional libraries, Jupyter Docker Stacks let developers create their own Dockerfile with instructions to install those dependencies. All containers generated from the customized image can use the libraries without any manual installation.

References

You can find more details regarding the Data Library, Plotly, Jupyter Docker Stacks, and related technologies for this notebook from the following resources:

- LSEG Data Library for Python on the LSEG Developer Community website.

- Plotly Official page.

- Plotly Python page.

- Plotly Express page.

- Plotly Graph Objects page.

- Jupyter Docker Stacks page.

- Jupyter Docker Stack on DockerHub website.

- Setting up and run Python Data Science Environment with Jupyter Docker Stacks article.

For any questions related to this article, please use the Developers Community Q&A page.