Disclaimer

To reproduce the sample below, you will need valid credentials for LSEG Tick History. The source code in this article has been simplified for illustration purposes; you can find the full project here.

Overview

As markets become more and more automated, competition is growing for cutting-edge technology and for industry-leading compliance and surveillance systems that deliver comprehensive data to help your internal teams and front-office traders find opportunities. Our Tick History product offering and scenarios help ensure:

- Reliable trading data from a single point of access.

- Broad, fast coverage.

- Historical data, which is a critical reference in the risk management process.

This will help you build a more efficient, scalable, and intelligent system to identify trading opportunities and enhance your competitive advantage.

Our Tick History offering provides pricing data captured from the real-time feeds of more than 500 trading venues and third-party contributors, helping you understand risk positions and ensure compliance and trade surveillance around data usage.

Benefits include:

- Rapid access to the data through integrated API and UI platforms.

- Easy access to accurate information from global exchanges.

- On-demand access to normalized, global, cross-asset data via a single connection.

Targeted audiences

- Head of Digital

- Head of Investment Product

- Buy-side Head of Trading / Trader

- Head of Data Science

- Head of Quantitative Research

- Portfolio Manager

- Head of Commodity Trading

- Head of Trading

The use case can be any of the following that suits your portfolio:

- Quantitative research and backtesting.

- Analytics.

- Market surveillance.

- Regulatory compliance.

LSEG Tick History

LSEG Tick History provides superior coverage of complete, timely, and global nanosecond tick data. This enables you to download the data you need when you need it, via a single interface.

Coverage goes back as far as 1996 with a standardized naming convention based on RIC symbology that helps lower your data management cost.

Captured from real-time data feeds, Tick History offers global tick data since 1996 across all asset classes, covering both OTC and exchange-traded instruments from 500+ exchanges and third-party contributor data, the majority available two hours after markets close.

More details about the product can be found here

The Tick History product provides the following templates:

- Pricing Data

- End of Day Pricing

- Tick History

- Time and Sales (L1)

- Market depth

- Intraday summaries (bars)

- Raw (MP, MBO, MBP)

- Reference Data

- Terms and Conditions

- Corporate Actions

- Standard Events

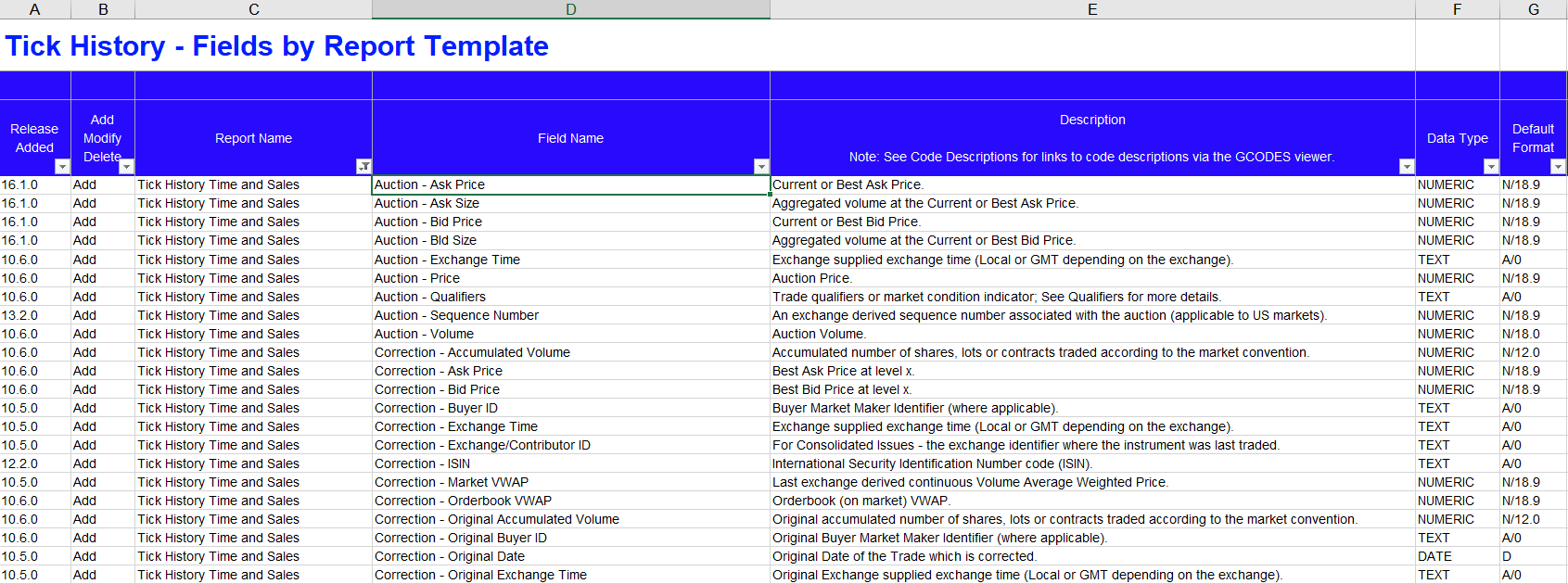

LSEG Tick History Custom Extractions - Data Content Guide

This document identifies the data available from the Tick History Custom Extractions offering, with fields organized by report template. It can be downloaded here (login required) under Support materials > User Guides & Manuals > LSEG Tick History Custom Extractions - Data Content Guide.

Pre-requisites:

- Create or update the portfolios of instruments / RICs that will be used as instrument lists.

- Identify the timeframe/duration of the extractions for historical tick-by-tick data.

- We are going to use a CSV file as input, with two columns per instrument: the identifier type and the identifier, as in the sample below. Alternatively, historical search options (based on names, etc.) can be used over date ranges.

- The “Tick History Time and Sales” template is used in the extractions; it lets you customize the fields based on Auction, Correction, Quotes, and Trades.

Sample instrument file content for an extraction on the HK market:

- RIC,0005.HK
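As a rough illustration of the input format described above, the sketch below parses a two-column instrument file into the `InstrumentIdentifiers` structure that the extraction request expects. The function name `load_instruments` and the `TYPE_MAP` lookup are hypothetical helpers, not part of the Tick History samples; the only mapping assumed is from `RIC` in the file to the `Ric` identifier type used in this article's request.

```python
import csv
import io

# Hypothetical mapping from the file's type column to the API's IdentifierType.
# Only "RIC" -> "Ric" is taken from this article; extend it for other types.
TYPE_MAP = {"RIC": "Ric"}


def load_instruments(lines):
    """Turn rows like 'RIC,0005.HK' into InstrumentIdentifiers entries."""
    identifiers = []
    for row in csv.reader(lines):
        # Skip blank lines and rows that do not have exactly two columns.
        if len(row) != 2:
            continue
        id_type, identifier = (cell.strip() for cell in row)
        if id_type.upper() not in TYPE_MAP:
            raise ValueError("Unsupported identifier type: " + id_type)
        identifiers.append({
            "Identifier": identifier,
            "IdentifierType": TYPE_MAP[id_type.upper()],
        })
    return identifiers


# In-memory stand-in for the CSV file; an open file object works the same way.
sample = io.StringIO("RIC,0005.HK\n")
instruments = load_instruments(sample)
print(instruments)
```

The resulting list can be assigned to the `InstrumentIdentifiers` key of the request body instead of hard-coding each instrument.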

List of fields used:

- Auction - Ask Price

- Auction - Bid Price

- Auction - Price

- Correction - Ask Price

- Correction - Bid Price

- Correction - Original Price

- Correction - Price

- Quote - Ask Price

- Quote - Bench Price

- Quote - Bid Price

- Quote - Fair Price

- Quote - Far Clearing Price

- Quote - Freight Price

- Quote - Invoice Price

- Quote - Lower Limit Price

- Quote - Mid Price

- Quote - Near Clearing Price

- Quote - Price

- Quote - Reference Price

- Quote - Theoretical Price

- Quote - Theoretical Price Ask

- Quote - Theoretical Price Bid

- Quote - Theoretical Price Mid

- Quote - Upper Limit Price

- Settlement Price - Date

- Settlement Price - Price

- Trade - Ask Price

- Trade - Average Price

- Trade - Bench Price

- Trade - Bid Price

- Trade - Freight Price

- Trade - Indicative Auction Price

- Trade - Lower Limit Price

- Trade - Mid Price

- Trade - Odd-Lot Trade Price

- Trade - Original Price

- Trade - Price

- Trade - Trade Price Currency

- Trade - Upper Limit Price

- Auction - Volume

- Correction - Accumulated Volume

- Correction - Original Accumulated Volume

- Correction - Original Volume

- Correction - Volume

- Quote - Volume

- Trade - Accumulated Volume

- Trade - Advancing Volume

- Trade - Declining Volume

- Trade - Exchange For Physical Volume

- Trade - Exchange For Swaps Volume

- Trade - Fair Value Volume

- Trade - Indicative Auction Volume

- Trade - Odd-Lot Trade Volume

- Trade - Original Volume

- Trade - Total Buy Volume

- Trade - Total Sell Volume

- Trade - Total Volume

- Trade - Unchanged Volume

- Trade - Volume

The descriptions of these fields can be found in the Data Content Guide.

We are going to use the following Tick History template: Tick History Time and Sales.

We establish a connection to Tick History via the API, then use the input instrument to extract the fields above with the Tick History Time and Sales template.

Coding part!

Prerequisites

To start working with the LSEG Tick History REST API, you can download the Tick History REST Tutorials Postman collection from the Tick History Downloads page, extract it, and import it into Postman on your local machine. You can then explore the REST API with the collection and environment. In this article, we use Python code to execute the API calls (the Tick History Python Code Samples are also available on the Tick History Downloads page).

For more information on the overview, quick start guide, documentation, downloads, and tutorials, see the LSEG Tick History - REST API page.

Preparation step

As a preparation step, we set these parameters and import the necessary libraries:

- filePath: Location to save the downloaded file

- fileNameRoot: Root of the name for the downloaded file

- CREDENTIALS_FILE: The name of the file that contains the Tick History credentials; we read the credentials from this file instead of putting them in the code directly

- useAws: Set this parameter to:

  - False: to download from the Tick History servers

  - True: to download from the Amazon Web Services cloud (recommended, as it is faster)

Here's an example of the credential file (credential.ini):

[TH]
username = YOUR_TH_USERNAME
password = YOUR_TH_PASSWORD
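Reading this file could look roughly like the sketch below. It is only an illustration built on the standard `configparser` module; the function name `load_credentials` is hypothetical, and the demo writes a throwaway copy of the sample file so the snippet runs on its own.

```python
import configparser
import os
import tempfile


def load_credentials(path, section="TH"):
    """Read username and password from an INI file such as credential.ini."""
    config = configparser.ConfigParser()
    # read() returns the list of files it parsed; an empty list means none was found.
    if not config.read(path):
        raise FileNotFoundError("Credential file not found: " + path)
    return config[section]["username"], config[section]["password"]


# Demo only: write the sample file from this article to a temporary folder.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "credential.ini")
    with open(demo_path, "w") as demo_file:
        demo_file.write("[TH]\nusername = YOUR_TH_USERNAME\npassword = YOUR_TH_PASSWORD\n")
    myUsername, myPassword = load_credentials(demo_path)

print(myUsername)
```

In the article's flow, the call would be `myUsername, myPassword = load_credentials(CREDENTIALS_FILE)`.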

Then import the necessary libraries:

import requests
import json
import configparser
import time
import pandas as pd

Authentication

Firstly, we need to establish a connection to the LSEG Tick History host to get the token using the credential read from the credential file. (The code to read credential from file can be found in the full code provided at the beginning of this article)

#Step 1: token request
requestUrl = "https://selectapi.datascope.lseg.com/RestApi/v1/Authentication/RequestToken"

requestHeaders = {
    "Prefer": "respond-async",
    "Content-Type": "application/json"
}

requestBody = {
    "Credentials": {
        "Username": myUsername,
        "Password": myPassword
    }
}

r1 = requests.post(requestUrl, json=requestBody, headers=requestHeaders)

if r1.status_code == 200:
    jsonResponse = json.loads(r1.text.encode('ascii', 'ignore'))
    token = jsonResponse["value"]
    print('Authentication token (valid 24 hours):')
    print(token)
else:
    #status_code is an integer, so convert it before concatenating
    print('An error occurred, error status code: ' + str(r1.status_code))

Extracting associated instrument

Next, let us use the TickHistoryTimeAndSalesExtractionRequest template to extract instrument data by sending an on-demand extraction request with the received token:

#Step 2: send an on demand extraction request using the received token
requestUrl = 'https://selectapi.datascope.lseg.com/RestApi/v1/Extractions/ExtractRaw'

requestHeaders = {
    "Prefer": "respond-async",
    "Content-Type": "application/json",
    "Authorization": "token " + token
}

requestBody = {
    "ExtractionRequest": {
        "@odata.type": "#DataScope.Select.Api.Extractions.ExtractionRequests.TickHistoryTimeAndSalesExtractionRequest",
        "ContentFieldNames": [
            "Auction - Ask Price",
            "Auction - Bid Price",
            "Auction - Price",
            "Correction - Ask Price",
            "Correction - Bid Price",
            "Correction - Original Price",
            "Correction - Price",
            "Quote - Ask Price",
            "Quote - Bench Price",
            "Quote - Bid Price",
            "Quote - Fair Price",
            "Quote - Far Clearing Price",
            "Quote - Freight Price",
            "Quote - Invoice Price",
            "Quote - Lower Limit Price",
            "Quote - Mid Price",
            "Quote - Near Clearing Price",
            "Quote - Price",
            "Quote - Reference Price",
            "Quote - Theoretical Price",
            "Quote - Theoretical Price Ask",
            "Quote - Theoretical Price Bid",
            "Quote - Theoretical Price Mid",
            "Quote - Upper Limit Price",
            "Settlement Price - Date",
            "Settlement Price - Price",
            "Trade - Ask Price",
            "Trade - Average Price",
            "Trade - Bench Price",
            "Trade - Bid Price",
            "Trade - Freight Price",
            "Trade - Indicative Auction Price",
            "Trade - Lower Limit Price",
            "Trade - Mid Price",
            "Trade - Odd-Lot Trade Price",
            "Trade - Original Price",
            "Trade - Price",
            "Trade - Trade Price Currency",
            "Trade - Upper Limit Price",
            "Auction - Volume",
            "Correction - Accumulated Volume",
            "Correction - Original Accumulated Volume",
            "Correction - Original Volume",
            "Correction - Volume",
            "Quote - Volume",
            "Trade - Accumulated Volume",
            "Trade - Advancing Volume",
            "Trade - Declining Volume",
            "Trade - Exchange For Physical Volume",
            "Trade - Exchange For Swaps Volume",
            "Trade - Fair Value Volume",
            "Trade - Indicative Auction Volume",
            "Trade - Odd-Lot Trade Volume",
            "Trade - Original Volume",
            "Trade - Total Buy Volume",
            "Trade - Total Sell Volume",
            "Trade - Total Volume",
            "Trade - Unchanged Volume",
            "Trade - Volume"
        ],
        "IdentifierList": {
            "@odata.type": "#DataScope.Select.Api.Extractions.ExtractionRequests.InstrumentIdentifierList",
            "InstrumentIdentifiers": [
                {
                    "Identifier": "0005.HK",
                    "IdentifierType": "Ric"
                }
            ],
            "ValidationOptions": None,
            "UseUserPreferencesForValidationOptions": False
        },
        "Condition": {
            "MessageTimeStampIn": "GmtUtc",
            "ApplyCorrectionsAndCancellations": False,
            "ReportDateRangeType": "Range",
            "QueryStartDate": "2023-01-03T05:00:00.000-05:00",
            "QueryEndDate": "2023-01-03T06:00:00.000-05:00",
            "DisplaySourceRIC": False
        }
    }
}

r2 = requests.post(requestUrl, json=requestBody, headers=requestHeaders)

#Display the HTTP status of the response
#Initial response status (after approximately 30 seconds wait) is usually 202
status_code = r2.status_code
print("HTTP status of the response: " + str(status_code))
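The request above hard-codes its query window as strings with an explicit UTC offset. If the window needs to be computed, a sketch along the following lines could build the `Condition` block from Python `datetime` objects. The helper names `to_query_timestamp` and `build_condition` are hypothetical, and the other condition settings are simply copied from the request in this article.

```python
from datetime import datetime, timedelta, timezone


def to_query_timestamp(moment):
    """Format a timezone-aware datetime like 2023-01-03T05:00:00.000-05:00."""
    if moment.tzinfo is None:
        raise ValueError("Use a timezone-aware datetime so the UTC offset is explicit")
    return moment.isoformat(timespec="milliseconds")


def build_condition(start, end):
    """Return a Condition dict with the same settings as this article's request."""
    # Format first: this also rejects naive datetimes before they are compared.
    start_text = to_query_timestamp(start)
    end_text = to_query_timestamp(end)
    if end <= start:
        raise ValueError("QueryEndDate must be later than QueryStartDate")
    return {
        "MessageTimeStampIn": "GmtUtc",
        "ApplyCorrectionsAndCancellations": False,
        "ReportDateRangeType": "Range",
        "QueryStartDate": start_text,
        "QueryEndDate": end_text,
        "DisplaySourceRIC": False,
    }


# Same one-hour window as the request in this article (UTC-05:00).
utc_minus_5 = timezone(timedelta(hours=-5))
condition = build_condition(
    datetime(2023, 1, 3, 5, 0, tzinfo=utc_minus_5),
    datetime(2023, 1, 3, 6, 0, tzinfo=utc_minus_5),
)
print(condition["QueryStartDate"], condition["QueryEndDate"])
```

Requiring timezone-aware datetimes keeps the offset explicit, which avoids silently querying the wrong hour.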

If required, poll the status of the request using the received location URL. Once the request has completed, retrieve the jobId and the extraction notes (Step 3 in the full project).

- As long as the status of the request is 202, the extraction is not finished: wait, then poll the status again until it is no longer 202.

- If the status is 202, display the location URL received in the response headers; this is the URL used to poll the status of the extraction request.

- When the status of the request is 200, the extraction is complete: retrieve and display the jobId and the extraction notes (it is recommended to analyse their content).

- If a status other than 200 is received, there was an error.
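The polling steps above can be sketched as follows. This is a minimal illustration rather than the code from the full project: the function name `poll_extraction` and its `http_get` and `sleep` parameters are hypothetical, and the `JobId` and `Notes` response keys are assumptions based on the Tick History REST tutorials. Passing the HTTP function in as an argument keeps the sketch free of third-party imports.

```python
import time


def poll_extraction(http_get, location_url, token, wait_seconds=30, sleep=time.sleep):
    """Poll location_url until the status is no longer 202, then return (jobId, notes)."""
    headers = {
        "Prefer": "respond-async",
        "Content-Type": "application/json",
        "Authorization": "token " + token,
    }
    while True:
        response = http_get(location_url, headers=headers)
        if response.status_code != 202:
            break
        # Still running: wait before asking again.
        sleep(wait_seconds)
    if response.status_code != 200:
        raise RuntimeError("Extraction failed, HTTP status: " + str(response.status_code))
    # Assumed response keys, following the Tick History REST tutorials.
    body = response.json()
    return body["JobId"], body["Notes"]


# Usage with the objects from the previous steps (not executed here):
# if r2.status_code == 202:
#     jobId, notes = poll_extraction(requests.get, r2.headers["location"], token)
```

If the first response is already 200, no polling is needed and the same two keys can be read from that response directly.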

#Step 4: get the extraction results, using the received jobId.
#We also save the compressed data to disk, as a GZIP.
#We only display a few lines of the data.
import gzip
import shutil

requestUrl = "https://selectapi.datascope.lseg.com/RestApi/v1/Extractions/RawExtractionResults" + "('" + jobId + "')" + "/$value"

#AWS requires an additional header: X-Direct-Download
if useAws:
    requestHeaders = {
        "Prefer": "respond-async",
        "Content-Type": "text/plain",
        "Accept-Encoding": "gzip",
        "X-Direct-Download": "true",
        "Authorization": "token " + token
    }
else:
    requestHeaders = {
        "Prefer": "respond-async",
        "Content-Type": "text/plain",
        "Accept-Encoding": "gzip",
        "Authorization": "token " + token
    }

r4 = requests.get(requestUrl, headers=requestHeaders, stream=True)

#Ensure we do not automatically decompress the data on the fly:
r4.raw.decode_content = False

if useAws:
    print('Content response headers (AWS server): type: ' + r4.headers["Content-Type"] + '\n')
    #AWS does not set header Content-Encoding="gzip".
else:
    print('Content response headers (Tick History server): type: ' + r4.headers["Content-Type"] + ' - encoding: ' + r4.headers["Content-Encoding"] + '\n')

#Next 2 lines display some of the compressed data, but if you uncomment them save to file fails
#print ('20 bytes of compressed data:')
#print (r4.raw.read(20))

#Build the file name first, because the messages below use it
fileName = filePath + '/' + fileNameRoot + ".csv.gz"
print('Saving compressed data to file:' + fileName + ' ... please be patient')

chunk_size = 1024
rr = r4.raw
#The with block closes the file automatically, so no explicit close is needed
with open(fileName, 'wb') as fd:
    shutil.copyfileobj(rr, fd, chunk_size)

print('Finished saving compressed data to file:' + fileName + '\n')

#Now let us read and decompress the file we just created.
#For the demo we limit the treatment to a few lines:
maxLines = 10
print('Read data from file, and decompress at most ' + str(maxLines) + ' lines of it:')

uncompressedData = ""
count = 0
with gzip.open(fileName, 'rb') as fd:
    for line in fd:
        dataLine = line.decode("utf-8")
        #Do something with the data:
        print(dataLine)
        uncompressedData = uncompressedData + dataLine
        count += 1
        if count >= maxLines:
            break

#Note: variable uncompressedData stores all the data that was read.
#This is not a good practice, that can lead to issues with large data sets.
#We only use it here as a convenience for the next step of the demo, to keep the code very simple.
#In production one would handle the data line by line (as we do with the screen display)
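As the closing comments of Step 4 point out, production code would handle the data line by line instead of accumulating it in one string. One possible shape for that, using only the standard library, is sketched below. The generator name `iter_rows` is hypothetical, and the demo file and its `Type` and `Price` columns are synthetic stand-ins, not real Tick History output.

```python
import collections
import csv
import gzip
import os
import tempfile


def iter_rows(gz_path):
    """Yield one dict per CSV row without loading the whole file into memory."""
    # Text mode ("rt") decompresses and decodes the data as it is read.
    with gzip.open(gz_path, "rt", encoding="utf-8", newline="") as fd:
        for row in csv.DictReader(fd):
            yield row


# Demo only: a tiny synthetic file stands in for the downloaded .csv.gz.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "demo.csv.gz")
    with gzip.open(demo_path, "wt", encoding="utf-8", newline="") as fd:
        fd.write("Type,Price\nTrade,1.0\nQuote,\nTrade,2.0\n")
    # Each row is processed and discarded, so memory use stays flat.
    type_counts = collections.Counter(row["Type"] for row in iter_rows(demo_path))

print(dict(type_counts))
```

With the real download, `iter_rows(fileName)` would stream the saved file in the same way.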

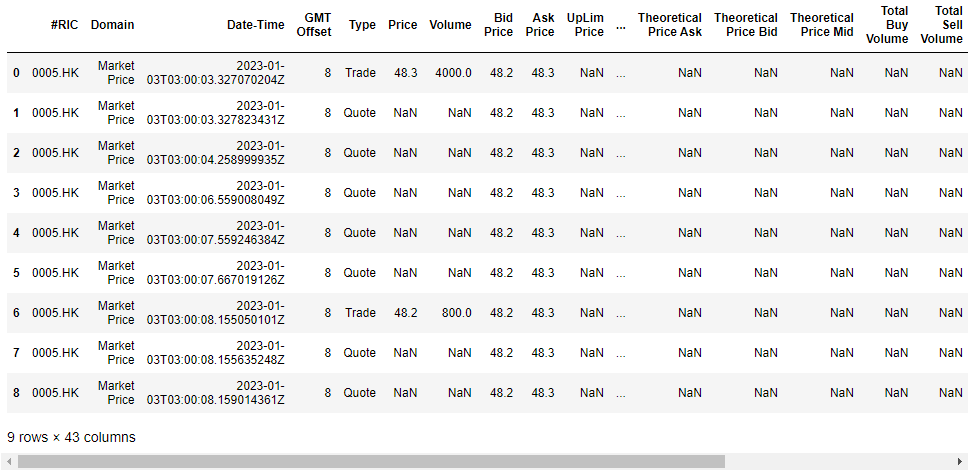

Results

Finally, display the results in the console:

#Step 5 (cosmetic): formatting the response received in step 4 using a pandas DataFrame
from io import StringIO
import pandas as pd

TimeAndSales = pd.read_csv(StringIO(uncompressedData))
TimeAndSales

Conclusion

Automating similar routines with Tick History is quick and simple, offering you greater flexibility, and is more efficient than the manual use of Tick History via the browser.
