Updated: 04 Mar 2025

Introduction

Power BI is a business analytic service providing interactive visualizations. Supported data sources include a wide range of file types, databases, Microsoft Azure services, and many other third-party online services. A common flow of work in Power BI begins by connecting to data sources and building a report in Power BI Desktop. That report is then published from Desktop to Power BI service and shared so users in service and mobile can consume (view and interact with) the report.

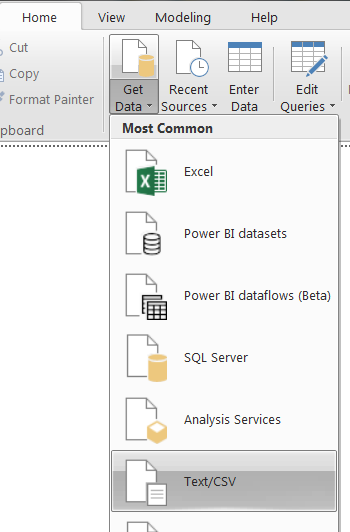

Power BI Desktop supports data sources including a wide range of file types, databases, Microsoft Azure services, and many other third-party online services. The supported data source is available under the ‘GetData’ Ribbon.

In case that data source is not available in the list, users may import data from well-known file formats (i.e. text/CSV, XML, JSON). The DataScope Select/ ThickHistory provide data in CSV format, so users can generate data in Web GUI, and then import the file to Power BI Desktop. Anyway, users can also develop “Query Editor” in Power Query Formula Language to connect to new data source. Microsoft also provides a Custom Connectors SDK for third parties to create their own data connectors and seamlessly plug them into Power Query.

In this article, we are going to demonstrate how to create a new custom data connector to connect to Datascope Select/Tick History product via DSS REST API. The new connector extracts Tick History ElektronTimeSeries and DSS IntradayPricing report template data via DSS REST API, so the basic understanding of DSS REST API is required. We would recommend reviewing the Connecting to Server and On Demand data extraction workflow tutorials first.

Quick Start

To help you understand how the connector works, below are the steps to use the custom connector.

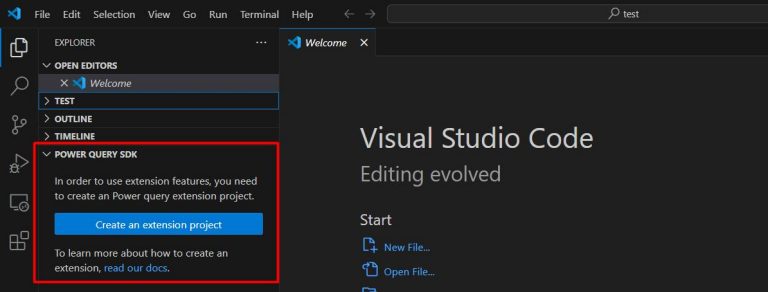

1. Install the Power Query SDK for Visual Studio.

To develop a Custom Connector, the Power Query SDK needs to be installed. The SDK is available in Visual Studio Market Place. It works with Visual Studio Code. The SDK will be add in the Visual Studio Code.

The template contains the following files:

- Connector definition file (.pq) – contain connector logic.

- A Query test file (.query.pq) – implement query to test the connector.

- A String resource file (resources.resx) – contain resources used by the connector (i.e. string description)

- PNG files of various sizes used to create icons

2. Install Power BI Desktop.

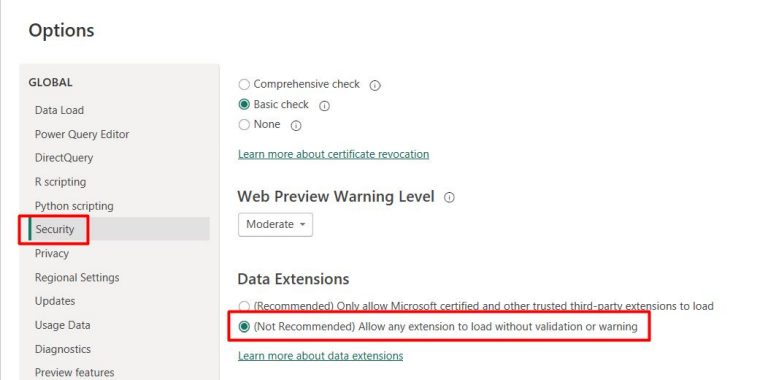

3. Change Security setting in Power BI Desktop, select File > Options and settings > Options > Security to allow an uncertified extension to load without validation or warning.

4. Build and deploy the connector

The sample code and projects file can be downloaded from this link. Once the package is unzipped, please open and build the project on the Visual Studio Code. The extension file (.mez) will be created in the “bin\AnyCPU\Debug” folder. Please copy the file to the “[Documents]\Microsoft Power BI Desktop\Custom Connectors” folder and then restart the Power BI Desktop.

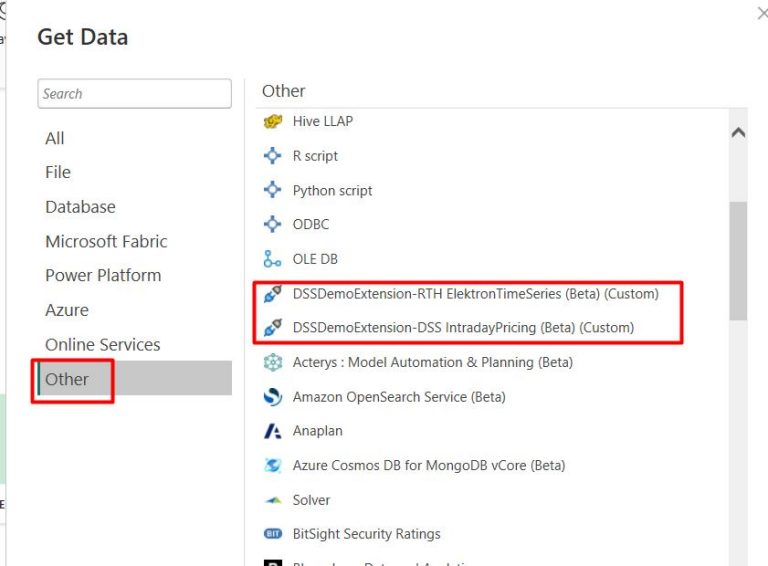

6. In Power BI Desktop, select the “Get Data” -> “Other”. You will see that new extensions are added.

- DSSDemoExtension-RTH ElektronTimeSeries (Beta) - retrieve Time Series historical data for last 30 days via the Tick History ElektronTimeSeries template. The extension accepts a RIC string as input.

- DSSDemoExtension-DSS IntradayPricing (Beta) – retrieve current market data via DSS IntradayPricing for three defined currency RICs: JPY=, EUR=, INR=.

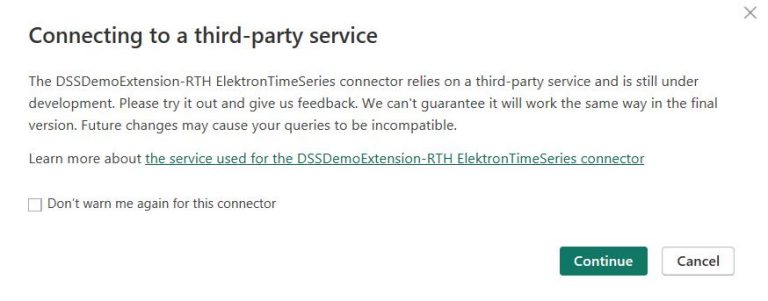

7. Select the Tick History ElektronTimeSeries which retrieve last 30 days of timeseries for a specific RIC. The extension is a third-party service, so the following warning pop-up about will be displayed. Select “Continue”.

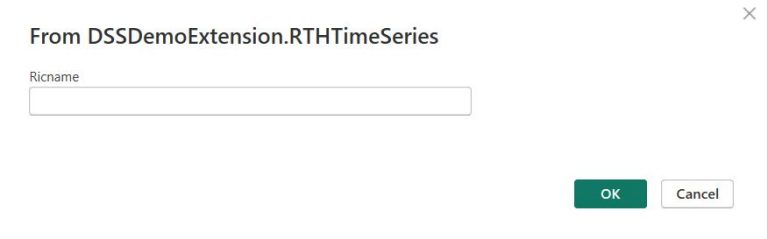

Then, the following UI to input parameters for the connector will be displayed. The created connectors for Tick History TimeSeries data accept a parameter for RIC name.

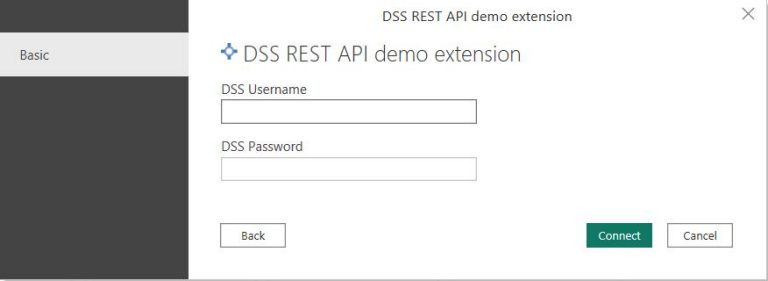

After that, the Credential UI will be displayed to input the DSS Username and Password. Input the DSS Username and Password and click “Connect”. The username and password will be provided to LSEG Tick History customers and are valid both in the API and the GUI.

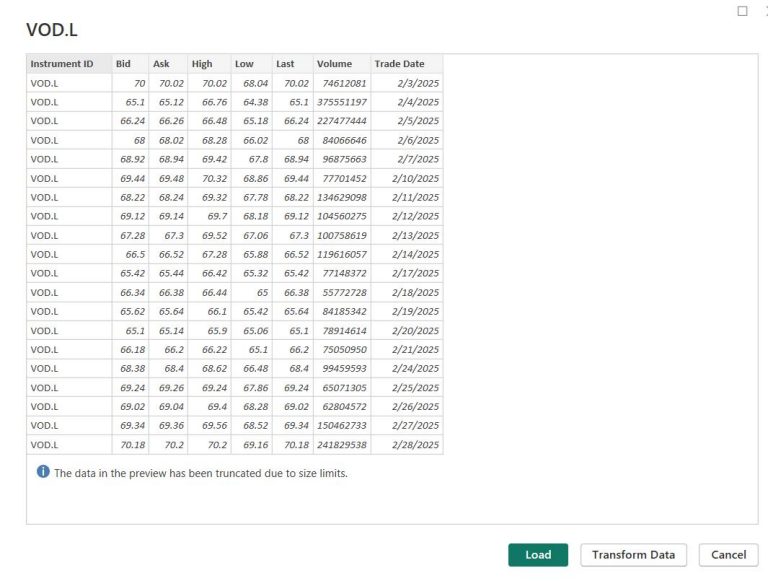

Finally, the extracted data will successfully be loaded into Power BI Desktop. The preview data is below.

How to develop the connector

Next, we are going to provide an explanation on how this extension is implemented so that you are able to modify the request as needed.

Please note that this article will not describe the Connector Extension implementation and Power Query in detailed. If you need detailed information, please look into the Power Query SDK Overview and Develop a connector using the Power Query SDK.

Define credential

An extension can support one or more kinds of Authentication (such as OAuth, Windows, etc). Once the extension is loaded, Authentication UI will be displayed in the format of Authentication kinds defined in the extension. DSS REST API normally uses DSS Username and Password to get a token for any API capability access, so we use the “UsernamePassword” kind. For more information about Authentication kinds, please see this link.

// Data Source Kind description

DSSDemoExtension = [

Authentication = [

UsernamePassword = [

UsernameLabel="DSS Username",

PasswordLabel="DSS Password"]

],

Label = Extension.LoadString("DataSourceLabel")

];

On Demand extraction

This extension follows the same On Demand extraction workflow of DSS REST API as other DSS REST API. The steps in the workflow are shown below.

- Request authentication token

- Request historical data from the server, using an on demand request

- Check the request status

- Retrieve the data

The main connector function is created following these steps.

First, it requests a token with Username, Password, and a current date and returns a token string. The reason for passing the current date will be explained later. We then use the token string for all API calls.

Next, in the ExtractRTH_TimeSeries() function, the extension requests historical time series data using an on demand request. The function will also check the request status and finally returns the jobId string. Anyway, if the request is unsuccessful, the function will return the error object with detailed information.

Finally, if the request is successful, the extension will call GetData function to load the data.

[DataSource.Kind="DSSDemoExtension", Publish="DSSDemoExtension.Publish1"]

shared DSSDemoExtension.RTHTimeSeries = (Ricname as text) =>

let

DSSUserName = Extension.CurrentCredential()[Username],

DSSPassword = Extension.CurrentCredential()[Password],

currentdate = Date.ToText(DateTime.Date(DateTime.LocalNow())),

//1) Request authentication token

token = Login(DSSUserName,DSSPassword,currentdate),

//2) Request historical data from the server, using an on demand request and 3) Check the request status

jobId = ExtractRTH_TimeSeries(token,Ricname),

//4) Retrieve the data

source = if Value.Is(jobId, type text) then

GetData(token,jobId)

else

jobId

in

source;

Login() function sends a token request to the RequestToken endpoint with DSS username and password. Any REST API request is sent using the Web.Contents function which supports both HTTP POST and GET. If the Content is defined, it will use POST.

According to this helpful blog, using Web.Contents, Power BI automatically caches HTTP response and then gets the response from the cache once the same request was made. This means that Power BI always reuse the token from the cache since the DSS Username and Password are rarely changed. However, the token is only valid for 24 hours. Reusing the token can cause “401” authorization errors. To avoid this issue, we need to add the current date in a header to make the request different from one in the cache.

// Request authentication token

Login = (user,pass,currenttime) =>

let

endpoint = "https://selectapi.datascope.refinitiv.com/RestApi/v1/Authentication/RequestToken",

body = "{""Credentials"": {""Username"": """ & user & """,""Password"": """ & pass & """}}",

response=Web.Contents(endpoint,[

Headers=[#"Content-Type"="application/json",

MyHeader=currenttime],

Content=Text.ToBinary(body)]),

responseBody= Json.Document(response),

value = responseBody[value]

in

"Token"&value;

ExtractRTH_TimeSeries() function sends On Demand extraction request to ExtractRaw endpoint. The body is for TimeSeries data with 30Days ago condition. You can modify the request body as you want. For other report templates and samples, please see these tutorials and API Reference tree.

ExtractRTH_TimeSeries = (token, ric) =>

let

endpoint = "https://selectapi.datascope.refinitiv.com/RestApi/v1/Extractions/ExtractRaw",

body =

"{

""ExtractionRequest"":

{

""@odata.type"": ""#DataScope.Select.Api.Extractions.ExtractionRequests.ElektronTimeseriesExtractionRequest"",

""ContentFieldNames"":

[

""Instrument ID"",

""Bid"",

""Ask"",

""High"",

""Low"",

""Last"",

""Volume"",

""Trade Date""

],

""IdentifierList"":

{

""@odata.type"": ""#DataScope.Select.Api.Extractions.ExtractionRequests.InstrumentIdentifierList"",

""InstrumentIdentifiers"":

[

{

""Identifier"":"""& ric &""",

""IdentifierType"":""Ric""

}

]

},

""Condition"":

{

""ReportDateRangeType"":""Relative"",

""RelativeEndDaysAgo"": 1,

""RelativeStartDaysAgo"": 30

}

}

}",

response = Web.Contents(

endpoint,

[Headers=

[#"Authorization"=token,

#"Content-Type"="application/json",

#"Prefer"= "respond-async;wait=1"],

Content=Text.ToBinary(body),

ManualStatusHandling={400,202}]),

status = Value.Metadata(response)[Response.Status],

jobId=if status = 200 then Json.Document(response)[JobId]

else if status = 202 then CheckStatus(token,Value.Metadata(response)[Headers][#"Location"])

else Error.Record("HTTP Status",Text.From(status), Json.Document(response)[error][message])

in

jobId;

On Demand extraction requests are executed as soon as possible. There is no guarantee on the delivery time, it depends on the amount of requested data, and the server load.

In the request, we set a preference for an asynchronous response. We will get a response in 30 seconds (default wait time) or less. If the extraction complete within 30 seconds, the server will respond with 200 OK status. The connector will get “JobId” from response’s body.

Otherwise, it will return 202 Accepted status which means processing has not yet completed. The response’s header contains “Location” which is used to check request status. The connector will get value from “Location” header and repeatedly check status in the CheckStatus() function. Once the process is complete, the 200 OK status will be received with “JobId” in the response.

The Value.WaitFor is a helper function which is useful when making an asynchronous HTTP request, and you need to poll the server until the request is complete.

CheckStatus = (token,location) =>

let

jobId = Value.WaitFor(

(iteration) =>

let

url = location,

response = Web.Contents(url, [Headers=[Authorization=token, #"Content-Type"="application/json"],ManualStatusHandling={202}]),

status = Value.Metadata(response)[Response.Status],

jobId=if status = 200 then Json.Document(response)[JobId]

else null

in

jobId,

(iteration) => #duration(0, 0, 0, Number.Power(2, iteration)),5)

in

jobId;

It is also possible that a 400 Bad Request with an error message is received when the request is invalid. The connector will verify, and then return Error.Record instead of JobId string.

For the last step, if the request is valid and complete, the GetData() will get the extracted result using the JobId from the previous step. The result normally is CSV format compressed in GZIP. However Power BI automatically decompresses the result, and then the connector converts the string data to CSV format.

GetData = (token,jobId) =>

let

endpoint = "https://selectapi.datascope.refinitiv.com/RestApi/v1/Extractions/RawExtractionResults('" & jobId & "')/$value",

Source = Csv.Document(Web.Contents(

endpoint,

[Headers=

[Authorization=token,

#"Content-Type"="application/json"]

]),

[Delimiter=",", Encoding=1252, QuoteStyle=QuoteStyle.None])

in

Source;

Troubleshooting

Running Power Query in Visual Studio with Power Query SDK

With the Power Query SDK, you can run the debugger for the connector development. The debugger will run on the ".query.pq" file extension which generally calls the connector extension. The output is similar to the result generated on the Power Query Desktop. Below is the DSSDemoExtension.query.pq file.

// Use this file to write queries to test your data connector

let

Source = DSSDemoExtension.RTHTimeSeries("JPY=")

in

Source

Package sniffer

When I try to develop this connector and find an issue, Power Query SDK provides limited information. I use a network sniffer such as Fiddler tool to monitor requests and responses sent between the connector and server. With the sequence of requests/responses, you can also use them to replicate issue using Postman. Some useful information is provided in this blog.

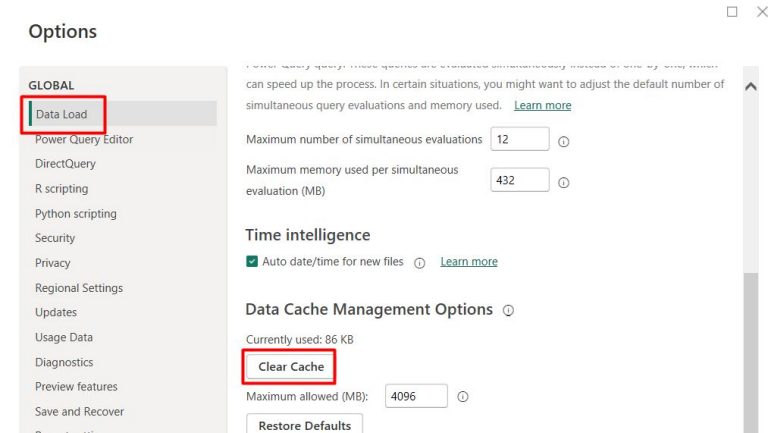

Power BI Desktop cache

In some scenarios, cache management of Power BI Desktop may cause unexpected behavior. You may try to clear cache to see if the issue can be solved. Please follow the steps in this link for instructions.

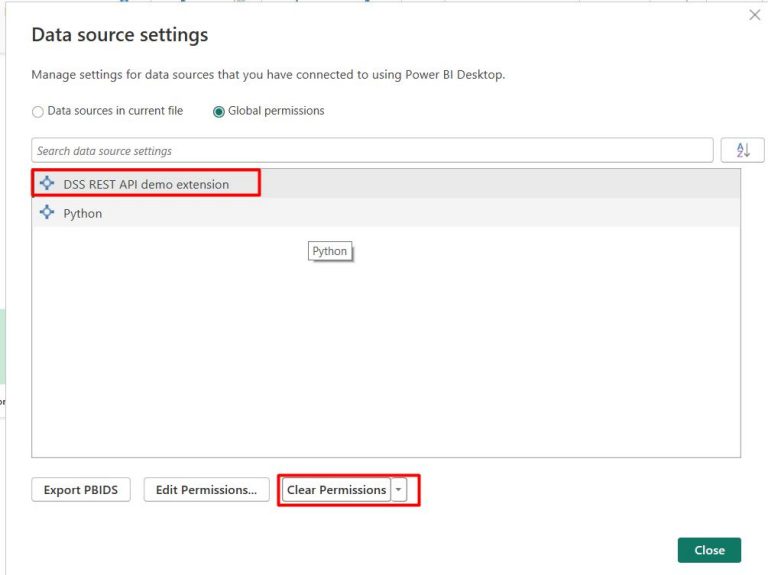

Clear Permissions

To clear DSS credentials, open the Data source settings from the Options and Settings. Then, select the DSS REST API demo extension, and click the Clear Permissions button.

Summary

In this article, we demonstrate how to develop a connector extension for Power BI to connect to Tick History/DSS data. It uses DataScope Select REST API to connect, retrieve data using On Demand extraction method. This allows Tick History/DSS data integration in Power BI. You can add/modify the connector to extract other report templates to serve your requirements.

Reference

DataScope Select REST API Tutorial

Tick History REST API Tutorial

Connector extensibility in Power BI